Hiera and how it works within Puppet

Hiera allows us to modify settings from modules within Puppet - for this example I will be tweaking some of the default settings from the saz/ssh module.

Let's start by installing the hiera module:

puppet module install puppet/hiera

Hiera makes use of hierarchies - for example servers in a specific location might need a particular DNS server, while all servers might require a specific SSH configuration. These levels are defined within the hiera.yaml file:

cat /etc/puppetlabs/code/environments/production/hiera.yaml

---
version: 5
defaults:
  # The default value for "datadir" is "data" under the same directory as the hiera.yaml
  # file (this file)
  # When specifying a datadir, make sure the directory exists.
  # See https://docs.puppet.com/puppet/latest/environments.html for further details on environments.
  # datadir: data
  # data_hash: yaml_data
hierarchy:
  - name: "Per-node data (yaml version)"
    path: "nodes/%{::trusted.certname}.yaml"
  - name: "Other YAML hierarchy levels"
    paths:
      - "common.yaml"

We're going to modify the hierarchy a little - so let's back it up first:

cp /etc/puppetlabs/code/environments/production/hiera.yaml /etc/puppetlabs/code/environments/production/hiera.yaml.bak

and replace it with:

---
version: 5
defaults:
  # The default value for "datadir" is "data" under the same directory as the hiera.yaml
  # file (this file)
  # When specifying a datadir, make sure the directory exists.
  # See https://docs.puppet.com/puppet/latest/environments.html for further details on environments.
  # datadir: data
  # data_hash: yaml_data
hierarchy:
  - name: "Per-Node"
    path: "nodes/%{::trusted.certname}.yaml"
  - name: "Operating System"
    path: "os/%{facts.os.family}.yaml"
  - name: "Defaults"
    paths:
      - "common.yaml"

We now have the ability to set OS specific settings - for example some (older) operating systems might not support specific cipher suites.
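To make the lookup order a little more concrete, here is a minimal Python sketch of a "first match wins" lookup across three levels like the ones above. This is only an illustration and not how Hiera is actually implemented - the keys and values in the sample data are made up.

```python
# Minimal sketch of a Hiera-style "first match wins" lookup.
# The level names mirror the hierarchy above; the keys and values
# are hypothetical and only exist for this illustration.

def lookup(key, levels):
    """Return (level name, value) from the first level that defines key."""
    for name, data in levels:
        if key in data:
            return name, data[key]
    raise KeyError(key)

levels = [
    ("Per-Node", {"example::dns_server": "10.0.0.53"}),
    ("Operating System", {"example::ciphers": ["aes256-ctr"]}),
    ("Defaults", {
        "example::dns_server": "192.168.0.53",
        "example::ntp_server": "pool.ntp.org",
    }),
]

# The per-node value shadows the default one.
print(lookup("example::dns_server", levels))
# A key only defined in the defaults falls through to the last level.
print(lookup("example::ntp_server", levels))
```

The most specific level is listed first, so a node file can override an OS-wide or default value simply by defining the same key.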

Let's run the following on our client to identify what Puppet classifies it as:

facter | grep family

family => "RedHat",

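So let's create the relevant structure (the per-OS files live under data/os, while common.yaml sits directly in the data directory):

mkdir -p /etc/puppetlabs/code/environments/production/data/os /etc/puppetlabs/code/environments/production/data/nodes

touch /etc/puppetlabs/code/environments/production/data/os/RedHat.yaml

touch /etc/puppetlabs/code/environments/production/data/os/Debian.yaml

touch /etc/puppetlabs/code/environments/production/data/common.yaml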

We'll proceed by installing the saz/ssh module:

puppet module install saz/ssh

In this example we will concentrate on hardening the SSH server:

cat <<'EOT' > /etc/puppetlabs/code/environments/production/data/common.yaml
---
ssh::storeconfigs_enabled: true
ssh::server_options:
  Protocol: '2'
  ListenAddress:
    - '127.0.0.1'
    - '%{::hostname}'
  PasswordAuthentication: 'no'
  SyslogFacility: 'AUTHPRIV'
  HostbasedAuthentication: 'no'
  PubkeyAuthentication: 'yes'
  UsePAM: 'yes'
  X11Forwarding: 'no'
  ClientAliveInterval: '300'
  ClientAliveCountMax: '0'
  IgnoreRhosts: 'yes'
  PermitEmptyPasswords: 'no'
  StrictModes: 'yes'
  AllowTcpForwarding: 'no'
EOT

We can check / test the values with:

puppet lookup ssh::server_options --merge deep --environment production --explain --node <node-name>
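The '--merge deep' option asks for hashes found at different hierarchy levels to be combined rather than the first one simply winning. As a rough illustration only (a simplified sketch, not Hiera's own code, and it ignores how arrays are merged), a deep merge of two hashes could look like this in Python - the 'common' and 'redhat' sample values are hypothetical:

```python
# Simplified sketch of a deep merge of two hashes (dicts).
# Values from the higher-priority hash win on conflicts; nested
# hashes are merged key by key instead of being replaced wholesale.

def deep_merge(low, high):
    """Recursively merge two dicts; `high` takes precedence."""
    merged = dict(low)
    for key, value in high.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = value
    return merged

# Hypothetical data: defaults from common.yaml, overrides from os/RedHat.yaml.
common = {"server_options": {"PasswordAuthentication": "no", "X11Forwarding": "no"}}
redhat = {"server_options": {"X11Forwarding": "yes", "UsePAM": "yes"}}

print(deep_merge(common, redhat))
```

Keys that only exist at one level survive the merge, while keys defined at both levels take the value from the more specific level.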

Finally restart the puppet server:

sudo service puppetserver restart

and poll the server from the client:

puppet agent -t

Wednesday, 27 December 2017

Creating files from templates with Puppet

To make use of a template when creating new files we can add something like the following to /etc/puppetlabs/code/environments/production/manifests/site.pp:

file { '/etc/issue':
  ensure  => present,
  owner   => 'root',
  group   => 'root',
  mode    => '0644',
  content => template('<module_name>/issue.erb'),
}

The source / content must be stored within a puppet module - so in the case we were using Saz's SSH module - we would place the template in:

/etc/puppetlabs/code/environments/production/modules/ssh/templates

touch /etc/puppetlabs/code/environments/production/modules/ssh/templates/issue.erb

and the site.pp file would look something like:

file { '/etc/issue':
  ensure  => present,
  owner   => 'root',
  group   => 'root',
  mode    => '0644',
  content => template('ssh/issue.erb'),
}

Reload the puppet server:

sudo service puppetserver reload

and pull down the configuration on the client:

puppet agent -t

Friday, 22 December 2017

Changing regional settings (locale, time zone, keyboard mappings) in CentOS 7 / RHEL

When deploying new instances of CentOS, regional settings such as the time zone are usually set by the OS installer, and more often than not there is no need to change them afterwards.

However this post will outline the steps that need to be taken if a server has been moved geographically or has simply not been configured correctly in the first place!

We'll start by changing the time zone - this is pretty straightforward and you can find the time zone settings available to the system in:

ls -l /usr/share/zoneinfo/

In this case we'll use GB (Great Britain) by replacing /etc/localtime with a symbolic link:

sudo rm /etc/localtime

sudo ln -s /usr/share/zoneinfo/GB /etc/localtime

We'll also ensure NTP is installed, started and enabled on boot:

sudo yum install ntp && sudo systemctl start ntpd

sudo systemctl enable ntpd

(On older, non-systemd releases use 'sudo chkconfig ntpd on' instead.)

We'll also want to change the system locale - we can view the current one with:

locale

or

cat /etc/locale.conf

and change it by first identifying the locales available to us:

localectl list-locales

and then set it with:

sudo localectl set-locale LANG=en_IE.utf8

or manually change locale.conf:

echo 'LANG=en_IE.utf8' | sudo tee /etc/locale.conf

and confirm with:

localectl status
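/etc/locale.conf holds simple KEY=value assignments (LANG=..., LC_TIME=... and so on). If you want to check the file from a script, a small Python sketch along these lines would do - the sample text below is made up and the function name is my own:

```python
# Sketch: parse the KEY=value assignments found in a locale.conf style file.
# Blank lines and '#' comments are skipped; surrounding quotes are stripped.

def parse_locale_conf(text):
    settings = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if sep:  # ignore lines that are not assignments
            settings[key.strip()] = value.strip().strip('"')
    return settings

# Hypothetical file contents for illustration.
sample = 'LANG="en_IE.utf8"\n# time format override\nLC_TIME=en_GB.utf8\n'
print(parse_locale_conf(sample))
```

On a real system you would pass in the contents of /etc/locale.conf rather than the sample string.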

Finally we need to change the key mappings - to view the available keymaps issue:

localectl list-keymaps

and then set with:

localectl set-keymap ie-UnicodeExpert

Wednesday, 20 December 2017

vi(m) Cheat Sheet

The following is a list of common commands that I will gradually compile for working with vi / vim.

Find and Replace (Current Line, first match)

:s/csharp/java/

Find and Replace (All Lines, every match)

:%s/csharp/java/g
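For comparison, the difference between substituting on the current line only (vim's ':s/csharp/java/') and across the whole file (':%s/csharp/java/g') can be sketched with Python's re module. The sample lines are made up:

```python
import re

# Two made-up lines standing in for the contents of a buffer.
lines = ["csharp and csharp", "more csharp here"]

# Like :s/csharp/java/ with the cursor on the first line:
# only the first match on that one line is replaced.
current_line = re.sub("csharp", "java", lines[0], count=1)

# Like :%s/csharp/java/g - every match on every line is replaced.
all_lines = [re.sub("csharp", "java", line) for line in lines]

print(current_line)
print(all_lines)
```

The 'g' flag in vim plays the same role as leaving out 'count' here: without it only the first match per line is changed.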

Tuesday, 19 December 2017

Adding a new disk with LVM

Identify the new disk with:

lsblk

and add the disk as a physical volume:

pvcreate /dev/sdb

Verify it with:

pvdisplay

Now create a new volume group with:

vgcreate myvg /dev/sdb

and then a new logical volume (ensuring all free space is allocated to it):

lvcreate -n mylv -l 100%FREE myvg

and verify with:

lvdisplay

and finally create a new filesystem on the volume:

mkfs.xfs /dev/myvg/mylv

Wednesday, 13 December 2017

Using pip with a Python virtual environment (venv)

A venv is a way of isolating a Python project's environment from that of the host. I only came across these because PyCharm now adopts them by default when creating new projects.

To install additional modules using pip we must first enter the virtual environment - your project should look something like:

├── bin

│ ├── activate

│ ├── activate.csh

│ ├── activate.fish

│ ├── activate_this.py

│ ├── easy_install

│ ├── easy_install-3.6

│ ├── pip

│ ├── pip3

│ ├── pip3.6

│ ├── python -> python3.6

│ ├── python3 -> python3.6

│ ├── python3.6

│ ├── python-config

│ ├── watchmedo

│ └── wheel

├── include

│ └── python3.6m -> /usr/include/python3.6m

├── lib

│ └── python3.6

├── lib64 -> lib

└── pip-selfcheck.json

In order to enter the virtual environment cd to the project directory e.g.:

cd /path/to/your/project

and then issue the following:

source venv/bin/activate

we can then use pip as usual to install modules:

pip install watchdog
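If you are ever unsure whether the virtual environment is actually active, a quick check from Python itself can help. The following is a small sketch (the function name is my own) - it compares the interpreter's prefix with the base installation's prefix, and also checks for the 'real_prefix' attribute that older releases of the virtualenv tool set:

```python
import sys

def in_virtualenv():
    """Return True when the running interpreter belongs to a virtual environment."""
    # Older releases of the 'virtualenv' tool set sys.real_prefix.
    if hasattr(sys, "real_prefix"):
        return True
    # The stdlib venv module makes sys.prefix differ from sys.base_prefix.
    return sys.prefix != getattr(sys, "base_prefix", sys.prefix)

print("virtual environment active:", in_virtualenv())
print("interpreter in use:", sys.executable)
```

Run it before and after 'source venv/bin/activate' and the interpreter path should change to the one inside the venv directory.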

vSphere Replication 6.5 Bug: 'Not Active' Status

This happened to me when setting up a brand new vSphere lab with vSphere 6.5 and the vSphere Replication Appliance 6.5.1.

After setting up a new replicated VM I was presented with the 'Not Active' status - although there was no information presented in the tool tip.

So to dig a little deeper we can use the CLI to query the replicated VM status - but firstly we'll need to obtain the VM id number:

vim-cmd vmsvc/getallvms

and then query the state with:

vim-cmd hbrsvc/vmreplica.getState <id>

Retrieve VM running replication state:

The VM is configured for replication. Current replication state: Group: CGID-1234567-9f6e-4f09-8487-1234567890 (generation=1234567890)

Group State: full sync (0% done: checksummed 0 bytes of 1.0 TB, transferred 0 bytes of 0 bytes)

So it looks like it's at least attempting to perform the replication - however it is stuck at 0% - so let's delve into the logs:

cat /var/log/vmkernel.log | grep Hbr

2017-12-13T10:12:18.983Z cpu21:17841592)WARNING: Hbr: 4573: Failed to establish connection to [10.11.12.13]:10000(groupID=CGID-123456-9f6e-4f09-8487-123456): Timeout

2017-12-13T10:12:45.102Z cpu18:17806591)WARNING: Hbr: 549: Connection failed to 10.11.12.13 (groupID=CGID-123456-9f6e-4f09-8487-123456): Timeout

It looks like the ESXi host is failing to connect to 10.11.12.13 (the vSphere Replication Appliance in my case) - so we can double check this with:

cat /dev/zero | nc -v 10.11.12.13 10000

(Fails)

However if we attempt to ping it:

ping 10.11.12.13

we get a response - so it looks like it's a firewall issue.

I attempt to connect to the replication appliance from another server:

cat /dev/zero | nc -v 10.11.12.13 10000

Ncat: Version 7.60 ( https://nmap.org/ncat )

Ncat: Connected to 10.11.12.13:10000.

So it looks like the firewall on this specific host is blocking outbound connections on port 10000.
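The same kind of TCP check can be scripted if nc isn't to hand. Below is a minimal Python sketch (the function name is my own) that simply attempts a TCP connection with a timeout. To keep the example self-contained it tests against a temporary listener on localhost rather than the real appliance - in practice you would call tcp_port_open('10.11.12.13', 10000).

```python
import socket

def tcp_port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # covers refused connections, timeouts and unreachable hosts
        return False

# Self-contained demonstration: listen on a free local port, then close it.
server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
server.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
server.listen(1)
port = server.getsockname()[1]

open_result = tcp_port_open("127.0.0.1", port)    # listener is up
server.close()
closed_result = tcp_port_open("127.0.0.1", port)  # listener has gone away

print(open_result, closed_result)
```

A timeout (as opposed to an immediate refusal) is what you would typically expect when a firewall is silently dropping the traffic, which matches the 'Timeout' entries in the vmkernel log above.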

My suspicions were confirmed when I reviewed the firewall rules from within vCenter on the Security Profile tab of the ESXi host.

Usually the relevant firewall rules are created automatically - however this time, for whatever reason, they have not been - so we'll need to proceed by creating a custom firewall rule (which unfortunately is quite cumbersome...):

SSH into the problematic ESXi host and create a new firewall config with:

touch /etc/vmware/firewall/replication.xml

and set the relevant write permissions:

chmod 644 /etc/vmware/firewall/replication.xml

chmod +t /etc/vmware/firewall/replication.xml

vi /etc/vmware/firewall/replication.xml

<!-- Firewall configuration information for vSphere Replication -->
<ConfigRoot>
  <service>
    <id>vrepl</id>
    <rule id='0000'>
      <direction>outbound</direction>
      <protocol>tcp</protocol>
      <porttype>dst</porttype>
      <port>
        <begin>10000</begin>
        <end>10010</end>
      </port>
    </rule>
    <enabled>true</enabled>
    <required>false</required>
  </service>
</ConfigRoot>
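As a typo in this file is easy to make, it can be worth checking that the XML is well-formed before refreshing the firewall. The following Python sketch (the function name is my own) parses a copy of the ruleset with the standard library and pulls out the direction, protocol and port range of each rule:

```python
import xml.etree.ElementTree as ET

# A copy of the ruleset from above, embedded so the example is self-contained.
RULESET = """<ConfigRoot>
  <service>
    <id>vrepl</id>
    <rule id='0000'>
      <direction>outbound</direction>
      <protocol>tcp</protocol>
      <porttype>dst</porttype>
      <port>
        <begin>10000</begin>
        <end>10010</end>
      </port>
    </rule>
    <enabled>true</enabled>
    <required>false</required>
  </service>
</ConfigRoot>"""

def summarise_rules(xml_text):
    """Return (service id, direction, protocol, first port, last port) per rule."""
    root = ET.fromstring(xml_text)  # raises ParseError if the XML is malformed
    rules = []
    for service in root.findall("service"):
        service_id = service.findtext("id")
        for rule in service.findall("rule"):
            rules.append((
                service_id,
                rule.findtext("direction"),
                rule.findtext("protocol"),
                int(rule.findtext("port/begin")),
                int(rule.findtext("port/end")),
            ))
    return rules

print(summarise_rules(RULESET))
```

This only checks the structure of the file - whether ESXi accepts the ruleset is still confirmed with the esxcli commands that follow.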

Revert the permissions with:

chmod 444 /etc/vmware/firewall/replication.xml

and restart the firewall service:

esxcli network firewall refresh

and check it's there with:

esxcli network firewall ruleset list

(Make sure it's set to 'enabled' - if not you can enable it via the vSphere GUI: ESXi Host >> Configuration >> Security Profile >> Edit Firewall Settings.)

and the rules with:

esxcli network firewall ruleset rule list | grep vrepl

Then re-check connectivity with:

cat /dev/zero | nc -v 10.11.12.13 10000

Connection to 10.11.12.13 10000 port [tcp/*] succeeded!

Looks good!

After reviewing the vSphere Replication monitor everything had started syncing again.

Sources:

https://kb.vmware.com/s/article/2008226

https://kb.vmware.com/s/article/2059893

Friday, 8 December 2017

Change default calendar permissions of a mailbox in Exchange 2010 / 2013 / 2016

Firstly obtain the current permissions with:

Get-MailboxFolderPermission -identity "<useremail>:\Calendar"

and to change them issue something like:

Set-MailboxFolderPermission -identity "<useremail>:\Calendar" -user Default -AccessRights Reviewer

Thursday, 7 December 2017

Using USB storage with ESXi / vSphere 6.0 / 6.5

In order to get USB drives working as storage with ESXi (which is not officially supported) we'll need to ensure the USB arbitrator service has been stopped. This will unfortunately prevent you from using USB pass-through devices in your VMs - however in a development environment I can afford to forgo this:

/etc/init.d/usbarbitrator stop

and ensure it is also disabled upon reboot:

chkconfig usbarbitrator off

We'll now plug the device in and identify the disk with dmesg or:

ls /dev/disks

Create a new GPT table on the disk:

partedUtil mklabel /dev/disks/mpx.vmhba37\:C0\:T0\:L0 gpt

* Note: Some disks will come up as 'naa...' as well *

We now need to identify the start / end sectors:

partedUtil getptbl /dev/disks/mpx.vmhba37\:C0\:T0\:L0

>> gpt

>> 38913 255 63 625142448

To work out the end sector we do:

[quantity-of-cylinders] * [quantity-of-heads] * [quantity-of-sectors-per-track] - 1

so: 38913 * 255 * 63 - 1

which equals:

625137344
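The same arithmetic as a tiny Python sketch, using the geometry reported by 'partedUtil getptbl' above (the function name is my own):

```python
def end_sector(cylinders, heads, sectors_per_track):
    """Last usable sector: cylinders * heads * sectors-per-track, minus one."""
    return cylinders * heads * sectors_per_track - 1

# Geometry from the getptbl output above: 38913 255 63
print(end_sector(38913, 255, 63))  # 625137344
```

Swap in the three numbers reported for your own disk to get the value to pass to 'partedUtil setptbl'.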

The start sector is always 2048 (in earlier versions of VMFS, e.g. VMFS3, it was 128), so we create the partition with:

partedUtil setptbl /dev/disks/mpx.vmhba37\:C0\:T0\:L0 gpt "1 2048 625137344 AA31E02A400F11DB9590000C2911D1B8 0"

and finally create the filesystem:

vmkfstools -C vmfs6 -S USB-Stick /dev/disks/mpx.vmhba37\:C0\:T0\:L0:1

Wednesday, 6 December 2017

Quickstart: Accessing an SQLite database from the command line

We'll firstly obtain the relevant packages:

sudo dnf install sqlite

or on Debian-based distros:

sudo apt-get install sqlite3

Then open the database with:

sqlite3 /path/to/database.sqlite

To view the tables we should issue:

.tables

and to review the rows within them:

select * from <table-name>;

and to describe the table schema issue:

.schema <table-name>

to insert issue:

insert into <table-name> values('testing',123);

and to delete issue:

delete from <table-name> where <column-name> = 1;
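The same operations can also be run from Python using the sqlite3 module in the standard library. The sketch below uses an in-memory database and a made-up 'demo' table so that it is safe to run anywhere:

```python
import sqlite3

# An in-memory database keeps the example self-contained;
# pass a file path instead to open an existing database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE demo (name TEXT, value INTEGER)")

# Equivalent of: insert into demo values('testing',123);
conn.execute("INSERT INTO demo VALUES (?, ?)", ("testing", 123))

# Equivalent of .tables - list the tables via sqlite_master.
tables = [row[0] for row in conn.execute(
    "SELECT name FROM sqlite_master WHERE type = 'table'")]

# Equivalent of: select * from demo;
rows = conn.execute("SELECT * FROM demo").fetchall()

# Equivalent of: delete from demo where value = 123;
conn.execute("DELETE FROM demo WHERE value = ?", (123,))
remaining = conn.execute("SELECT COUNT(*) FROM demo").fetchone()[0]

conn.close()
print(tables, rows, remaining)
```

Using '?' placeholders rather than building the SQL string by hand avoids quoting problems when the values come from user input.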

Wednesday, 8 November 2017

Configuring Dog Tag (PKI) Certificate Authority on Fedora

After trialling several web-based CAs, Dogtag was one of the few I found a reasonable amount of documentation for, and it has readily available packages for CentOS / Fedora.

Firstly let's install the package (it's not yet available in the stable repo):

sudo yum --enablerepo=updates-testing install dogtag-pki 389-ds-base

We will use 389 Directory Server to create a new LDAP server instance that Dogtag can use:

sudo setup-ds.pl --silent \
  General.FullMachineName=`hostname` \
  General.SuiteSpotUserID=nobody \
  General.SuiteSpotGroup=nobody \
  slapd.ServerPort=389 \
  slapd.ServerIdentifier=pki-tomcat \
  slapd.Suffix=dc=example,dc=com \
  slapd.RootDN="cn=Directory Manager" \
  slapd.RootDNPwd=yourpassword

and then create our CA subsystem with:

sudo su -

pkispawn

Subsystem (CA/KRA/OCSP/TKS/TPS) [CA]:

Tomcat:

Instance [pki-tomcat]:

HTTP port [8080]:

Secure HTTP port [8443]:

AJP port [8009]:

Management port [8005]:

Administrator:

Username [caadmin]:

Password:

Verify password:

Import certificate (Yes/No) [N]?

Export certificate to [/root/.dogtag/pki-tomcat/ca_admin.cert]:

Directory Server:

Hostname [YOURVM.LOCAL]:

Use a secure LDAPS connection (Yes/No/Quit) [N]?

LDAP Port [389]:

Bind DN [cn=Directory Manager]:

Password:

Base DN [o=pki-tomcat-CA]:

Security Domain:

Name [LOCAL Security Domain]:

Begin installation (Yes/No/Quit)? Y

Log file: /var/log/pki/pki-ca-spawn.20171108144208.log

Installing CA into /var/lib/pki/pki-tomcat.

Storing deployment configuration into /etc/sysconfig/pki/tomcat/pki-tomcat/ca/deployment.cfg.

Notice: Trust flag u is set automatically if the private key is present.

Created symlink /etc/systemd/system/multi-user.target.wants/pki-tomcatd.target → /usr/lib/systemd/system/pki-tomcatd.target.

==========================================================================

INSTALLATION SUMMARY

==========================================================================

Administrator's username: caadmin

Administrator's PKCS #12 file:

/root/.dogtag/pki-tomcat/ca_admin_cert.p12

To check the status of the subsystem:

systemctl status pki-tomcatd@pki-tomcat.service

To restart the subsystem:

systemctl restart pki-tomcatd@pki-tomcat.service

The URL for the subsystem is:

https://YOURVM.LOCAL:8443/ca

PKI instances will be enabled upon system boot

==========================================================================

We can now either use the CLI or web-based interface to manage the server - however we will first need to ensure that the administrator certificate generated earlier (the PKCS #12 file, /root/.dogtag/pki-tomcat/ca_admin_cert.p12) is imported into the Firefox certificate store:

Firefox >> Edit >> Preferences >> Advanced >> Certificates >> View Certificates >> 'Your Certificates' >> 'Import...'

Browse to the web ui: https://YOURVM.LOCAL:8443/ca

You should be presented with a certificate selection dialog.

End users can access the following URL in order to request certificates:

https://yourvm.local:8443/ca/ee/ca/

For the purposes of this tutorial we will keep things simple and generate a certificate for use on a Windows machine - so let's first select 'Manual Server Certificate Enrolment' and then generate a private key and a certificate signing request (CSR):

openssl genrsa -out computer.key 2048

openssl req -new -sha256 -key computer.key -out computer.csr

cat computer.csr

and paste the CSR into the request form.

Hopefully (after hitting submit) the request will be accepted.

Now - let's head to the admin section and approve the request:

https://yourvm.local:8443/ca/agent/ca/

List Requests >> Find

Click on the request, check the details etc. and finally hit 'Approve' at the bottom.

Once it has been approved you should see a copy of the (BASE64 encoded) certificate at the bottom of the confirmation page.

Finally we'll want to package this up along with the corresponding private key:

openssl pkcs12 -inkey computer.key -in computer.pem -export -out computer.pfx

(where 'computer.pem' is the public portion that has just been generated.)

Proceed by importing the PFX file into the Windows Computer certificate store under 'Personal'.

Sources

Fedora Project :: PKI :: Quick Start

Wednesday, 1 November 2017

Implementing 802.1X with a Cisco 2960, FreeRADIUS and Windows 7 / 10

802.1X allows you to securely authenticate devices connecting to a network - while often employed in wireless networks it is also frequently used alongside wired ones. A typical transaction involves the authenticator (the switch in this case) sending an EAP request to the supplicant (the client workstation in this case), which then sends back an EAP response.

For this lab we will be focusing on wired networks and will be attempting to address the problem of visiting employees from Company A plugging their equipment into Company B's infrastructure.

To start we will need the following components:

- A client machine running Windows 7 / 10 (192.168.20.2/24)

- A Cisco 2960 switch with IOS > 15 (192.168.20.1/24)

- A Linux box running FreeRADIUS (192.168.20.254/24)

Switch

So let's first look at the switch portion - we'll configure dot1x and RADIUS on the switch:

conf t
aaa new-model
radius server dot1x-auth-serv
 address ipv4 192.168.20.254 auth-port 1812 acct-port 1813
 timeout 3
 key <sharedkey>
aaa group server radius dot1x-auth
 server name dot1x-auth-serv
aaa authentication dot1x default group dot1x-auth
aaa accounting dot1x default start-stop group dot1x-auth
aaa authorization network default group dot1x-auth

and proceed by enabling dot1x:

dot1x system-auth-control

and we'll then enable it on the relevant ports:

int range gi0/1-5
 switchport mode access
 authentication port-control auto
 dot1x pae authenticator

FreeRADIUS Server

We'll continue on the server by installing radiusd:

sudo yum install freeradius

and then use samba to communicate with the domain:

sudo yum install samba samba-common samba-common-tools krb5-workstation openldap-clients policycoreutils-python

(We are not actually setting up a samba server - instead just using some of the tools that are provided with it!)

vi /etc/samba/smb.conf

[global]
    workgroup = <domain-name>
    security = ads
    winbind use default domain = no
    password server = <ad-server>
    realm = <domain-name>
    #passdb backend = tdbsam

[homes]
    comment = Home Directories
    valid users = %S, %D%w%S
    browseable = No
    read only = No
    inherit acls = Yes

and then edit the kerberos configuration:

vi /etc/krb5.conf

# Configuration snippets may be placed in this directory as well
includedir /etc/krb5.conf.d/

[logging]
 default = FILE:/var/log/krb5libs.log
 kdc = FILE:/var/log/krb5kdc.log
 admin_server = FILE:/var/log/kadmind.log

[libdefaults]
 dns_lookup_realm = false
 ticket_lifetime = 24h
 renew_lifetime = 7d
 forwardable = true
 rdns = false
 # default_realm = EXAMPLE.COM
 default_ccache_name = KEYRING:persistent:%{uid}

[realms]
 DOMAIN.COM = {
  kdc = pdc.domain.com
 }

[domain_realm]
 .domain.com = DOMAIN.COM
 domain.com = DOMAIN.COM

and then modify NSS to ensure that it performs lookups using winbind:

vi /etc/nsswitch.conf

passwd: files winbind

shadow: files winbind

group: files winbind

protocols: files winbind

services: files winbind

netgroup: files winbind

automount: files winbind

ensure samba starts on boot and restart the system:

sudo systemctl enable smb

sudo systemctl enable winbind

sudo shutdown -r now

Now join the domain with:

net join -U <admin-user>

and attempt to authenticate against a user with:

wbinfo -a <username>%<password>

should return something like:

Could not authenticate user user%pass with plaintext password

challenge/response password authentication succeeded

We also need to ensure NTLM authentication works (as this is what is used with FreeRadius):

ntlm_auth --request-nt-key --domain=<domain-name> --username=<username>

should return:

NT_STATUS_OK: Success (0x0)

(Providing the account is in good order e.g. not locked etc.)

The 'ntlm_auth' program needs access to the 'winbind_privileged' directory - so we should ensure that the user running the radius server is within the 'wbpriv' group:

usermod -a -G wbpriv radiusd

and then proceed to set up FreeRADIUS (already installed above), starting with enabling it on boot:

sudo systemctl enable radiusd

mv /etc/raddb/clients.conf /etc/raddb/clients.conf.orig

vi /etc/raddb/clients.conf

and add the following:

client <switch-ip> {

secret = <secret-key>

shortname = <switch-ip>

nastype = cisco

}

We'll now configure mschap:

vi /etc/raddb/mods-enabled/mschap

and ensure the following is set:

with_ntdomain_hack = yes

ntlm_auth = "/usr/bin/ntlm_auth --request-nt-key --username=%{%{Stripped-User-Name}:-%{%{User-Name}:-None}} --challenge=%{%{mschap:Challenge}:-00} --nt-response=%{%{mschap:NT-Response}:-00}"

and the EAP configuration:

sudo vi /etc/raddb/mods-available/eap

Search for the following line:

tls-config tls-common

and uncomment:

random_file = /dev/urandom

Make sure your firewall is set up correctly:

sudo iptables -A INPUT -p udp -m state --state NEW -m udp --dport 1812 -j ACCEPT

sudo iptables -A INPUT -p udp -m state --state NEW -m udp --dport 1813 -j ACCEPT

and then test the configuration by running FreeRadius in test mode:

sudo radiusd -XXX

Then on the Windows 7 / 10 client workstation ensure that the 'Wired AutoConfig' service has been started (and has also been set to 'Automatic'.)

On the 'Authentication' tab of the relevant NIC's properties ensure that 'Enable 802.1X authentication' has been ticked.

Within Windows 10 you should not need to perform this - however it's always best to check the defaults just in case!

Click 'Configure...' next to 'Secured password (EAP-MSCHAP v2)' and ensure that 'Automatically use my Windows logon name and password' is ticked.

Finally hit 'OK' and back on the 'Authentication' tab - click on 'Additional settings...' and ensure 'Specify authentication mode' is ticked and is set to 'User authentication'.

We can now attempt to plug the client into the switch and with any luck we will obtain network access!

Sources

https://documentation.meraki.com/MS/Access_Control/Configuring_802.1X_Wired_Authentication_on_a_Windows_7_Client

http://wiki.freeradius.org/guide/freeradius-active-directory-integration-howto

Wednesday, 18 October 2017

SELinux: Adding a trusted directory into the httpd policy

By default on CentOS 7 / RHEL the '/var/www' directory is not permitted as part of the httpd policy - so instead we need to use the semanage command in order to add this directory:

semanage fcontext -a -t httpd_sys_rw_content_t '/var/www/'

and then apply the context changes with:

restorecon -v /var/www/

you will also need to apply the context changes to any files within the directory as well e.g.:

restorecon -v /var/www/index.html

Thursday, 5 October 2017

Using Arachni Scanner with cookies / restricted areas

Below is a command line example I like to use with the Arachni Scanner - it allows you to use a session cookie (which you can obtain from something like Tamper Data) and ensures that specific URLs are not called - for example logoff - which would (obviously) kill our session:

./arachni --http-cookie-string "cookie123" --scope-exclude-pattern logoff --scope-exclude-pattern login https://yourdomain.com/auth/restrictedarea/

Thursday, 28 September 2017

Python Example: Viewing members of a group with ldap3

Although the ldap3 module for python is well documented I didn't find many good examples - so I decided to publish this one for others:

from ldap3 import Server, Connection, ALL, NTLM, SUBTREE
import re

# Global vars
BindUser = 'domain\\username'
BindPassword = '<yourpassword>'
SearchGroup = 'Domain Admins'
ADServer = 'dc01.domain.tld'
SearchBase = 'DC=domain,DC=tld'

def getUsersInGroup(username, password, group):
    # Return the 'member' attribute of the named group
    server = Server(ADServer)
    # auto_bind=True binds on creation, so no separate conn.bind() is needed
    conn = Connection(server, user=username, password=password, authentication=NTLM, auto_bind=True)
    conn.search(search_base=SearchBase, search_filter='(&(objectClass=GROUP)(cn=' + group + '))', search_scope=SUBTREE, attributes=['member'], size_limit=0)
    result = conn.entries
    conn.unbind()
    return result

def getUserDescription(username, password, userdn):
    # Return the 'description' attribute of the named user
    server = Server(ADServer)
    conn = Connection(server, user=username, password=password, authentication=NTLM, auto_bind=True)
    conn.search(search_base=SearchBase, search_filter='(&(objectClass=person)(cn=' + userdn + '))', search_scope=SUBTREE, attributes=['description'], size_limit=0)
    result = conn.entries
    conn.unbind()
    return result

print('Querying group: ' + SearchGroup)
regex_short = r" +CN=([a-zA-Z ]+)"  # extracts username only
regex_long = r" +(?:[O|C|D][U|N|C]=[a-zA-Z ]+,?)+"  # extracts complete DN
matches = re.findall(regex_short, str(getUsersInGroup(username=BindUser, password=BindPassword, group=SearchGroup)))

print('Found ' + str(len(matches)) + ' users associated with this group...')
for match in matches:
    print('Getting description for account: ' + match + '...')
    match_description = str(getUserDescription(username=BindUser, password=BindPassword, userdn=match))
    # check if user has a valid description
    regex_desc = r"description:[ A-Za-z]+"
    found = re.search(regex_desc, match_description)
    if found:
        print(found.group(0))
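One thing to watch with the example above is that the group name is concatenated straight into the search filter - characters such as '(', ')', '*' and '\' have a special meaning in LDAP filters (RFC 4515). Below is a minimal sketch of escaping the value first, using only the standard library. The helper names 'escape_filter_value' and 'group_filter' are my own and are not part of ldap3:

```python
def escape_filter_value(value):
    """Escape the characters RFC 4515 treats as special inside a filter value."""
    replacements = {
        '\\': r'\5c',
        '*': r'\2a',
        '(': r'\28',
        ')': r'\29',
        '\x00': r'\00',
    }
    # Work character by character so an inserted backslash is never re-escaped
    return ''.join(replacements.get(ch, ch) for ch in value)


def group_filter(group):
    """Build the same group filter as the example, with the name escaped."""
    return '(&(objectClass=GROUP)(cn=' + escape_filter_value(group) + '))'


print(group_filter('Domain Admins'))
print(group_filter('Ops (EU)*'))
```

I believe ldap3 also ships its own helper for this (escape_filter_chars in ldap3.utils.conv) - if it is available in your version it is probably the better choice.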

Wednesday, 27 September 2017

Python ldap: AttributeError: module 'ldap' has no attribute 'open'

Uninstall the older ldap module:

sudo pip uninstall ldap

and install the newer 'python-ldap' module:

sudo pip install python-ldap

Tuesday, 26 September 2017

Changing a puppet master certificate

In the event you want to change a puppet server's hostname you will also need to generate a new certificate and re-issue a certificate to each of its agents.

Firstly delete the existing certificate on the puppet master:

rm -Rf /etc/puppetlabs/puppet/ssl/

and on the puppetserver / CA issue:

sudo puppet cert destroy <puppetserver.tld>

and then on the puppetserver generate a new CA with:

puppet cert generate puppetserver.int --dns_alt_names=puppetserver,puppetdb

start the server:

puppet master --no-daemonize --debug

and on each puppet agent generate a new certificate - but firstly ensure existing old CA certs etc. have been removed.

Run the following on the master:

puppet cert clean client01

and the following on the client:

sudo service puppet stop

rm -Rf /etc/puppetlabs/puppet/ssl

rm -Rf /opt/puppetlabs/puppet/cache/client_data/catalog/client01.json

sudo service puppet start

puppet agent --test --dns_alt_names=puppetserver,puppetdb

And finally sign them on the puppet master:

puppet cert --list

puppet cert --allow-dns-alt-names sign puppetserver.int

puppet cert --allow-dns-alt-names sign puppetagent01.int

puppet cert --allow-dns-alt-names sign puppetagent02.int

and so on...

If you are using PuppetDB you will also need to ensure it's using the latest CA cert:

rm -Rf /etc/puppetlabs/puppetdb/ssl

puppetdb ssl-setup

Thursday, 21 September 2017

Forwarding mail for the root user to an external address

Quite often I find that mail, such as that generated by cron jobs, is sent to the user the job is executed under - for example root.

Using the 'aliases' file we can instruct any mail destined for a specific user to be forwarded to another (internal or external) address - for example by adding the following to /etc/aliases:

sudo vi /etc/aliases

root: <external-email-address>

and ensure those changes take effect by issuing:

sudo newaliases

and finally reloading the mail server:

sudo service postfix reload
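If you want to double check what an alias resolves to before reloading, the file format is simple enough to parse. This is only a rough sketch - the function name 'parse_aliases' and the sample addresses are made up for illustration, and it assumes one alias per line (it ignores the continuation lines that the real format allows):

```python
def parse_aliases(text):
    """Return {alias: [targets]} from the contents of an aliases file."""
    aliases = {}
    for line in text.splitlines():
        stripped = line.strip()
        # Skip blank lines, comments and anything that is not 'name: value'
        if not stripped or stripped.startswith('#') or ':' not in stripped:
            continue
        name, targets = stripped.split(':', 1)
        aliases[name.strip()] = [t.strip() for t in targets.split(',') if t.strip()]
    return aliases


# Hypothetical file contents, not taken from a real system
sample = """
# Person who should get root's mail
postmaster: root
root: admin@example.com, oncall@example.com
"""

print(parse_aliases(sample)['root'])
```

On a real system you would pass in the contents of /etc/aliases instead of the sample string.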

Tuesday, 19 September 2017

Installing / setting up Samba on CentOS 7

Firstly install the required packages:

sudo yum install samba samba-client samba-common

We'll use /mnt/backup for the directory we wish to share:

mkdir -p /mnt/backup

Make a backup copy of the existing samba configuration:

sudo cp /etc/samba/smb.conf /etc/samba/smb.conf.orig

and add the following into /etc/samba/smb.conf:

[global]

workgroup = WORKGROUP

netbios name = centos

security = user

[ARCHIVE]

comment = archive share

path = /mnt/backup

public = no

valid users = samba1, @sambausers

writable = yes

browseable = yes

create mask = 0765

*NOTE*: [ARCHIVE] is the share name!

Let's proceed by creating our samba user:

groupadd sambausers

useradd samba1

usermod -G sambausers samba1

smbpasswd -a samba1

Ensure the user / group has the relevant permissions:

chgrp -R sambausers /mnt/backup

chmod -R 0770 /mnt/backup

In my case this didn't work since this directory was a USB hard drive formatted with NTFS - so instead I had to set the group, owner and permissions as part of the mounting process in fstab - my fstab line looked something like:

UUID=XXXXXXXXXXXXXXX /mnt/backup ntfs umask=0077,gid=1001,uid=0,noatime,fmask=0027,dmask=0007 0 0

This ensures the group we created has access to the directory and that normal users do not have access to the files / directories. (You'll need to replace the 'gid' by obtaining the group id with getent or doing a cat /etc/group | grep "<group-name>")
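The fmask / dmask values in the fstab line work like an inverted chmod - any bit set in the mask is removed from 0777. The sketch below shows that arithmetic, assuming the mount behaves the way ntfs-3g does in this respect ('effective_mode' is just a name I picked for illustration):

```python
import stat


def effective_mode(mask, is_dir=False):
    """Show the permissions left once an fmask/dmask style mask is applied."""
    mode = 0o777 & ~mask  # clear every bit that is set in the mask
    kind = stat.S_IFDIR if is_dir else stat.S_IFREG
    return stat.filemode(kind | mode)


print('files (fmask=0027):      ', effective_mode(0o027))
print('directories (dmask=0007):', effective_mode(0o007, is_dir=True))
```

So with the line above files end up readable (but not writable) by the group, while directories are fully accessible to the owner and group and closed to everyone else.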

If you have SELinux enabled you will want to change the security context on the directory you wish to export:

sudo yum -y install policycoreutils-python

sudo chcon -R -t samba_share_t /mnt/backup

sudo semanage fcontext -a -t samba_share_t /mnt/backup

sudo setsebool -P samba_enable_home_dirs on

Enable and start the relevant services:

sudo systemctl enable nmb

sudo systemctl enable smb

sudo systemctl start nmb

sudo systemctl start smb

While smbd handles the file and printer sharing services, user authentication and data sharing; nmbd handles NetBIOS name service requests generated by Windows machines.

Add the relevant firewall rules in:

sudo iptables -t filter -A INPUT -i ethX -m state --state NEW -m udp -p udp --dport 137 -j ACCEPT

sudo iptables -t filter -A INPUT -i ethX -m state --state NEW -m udp -p udp --dport 138 -j ACCEPT

sudo iptables -t filter -A INPUT -i ethX -m state --state NEW -m tcp -p tcp --dport 139 -j ACCEPT

sudo iptables -t filter -A INPUT -i ethX -m state --state NEW -m tcp -p tcp --dport 445 -j ACCEPT

From a Windows client we can test the share with something like:

cmd.exe

net use \\SERVER\archive

or from *nix using the smbclient utility.

Erasing an MBR (or GPT) and / or partition table and data of a disk

This can be performed with dd - in order to wipe the MBR boot code (held in the first sector, which is executed after the BIOS / hardware initialisation) we should issue:

sudo dd if=/dev/zero of=/dev/sdx bs=446 count=1

This wipes the first 446 bytes of the disk - while if we want to erase the MBR and the partition table we need to zero the first 512 bytes:

sudo dd if=/dev/zero of=/dev/sdx bs=512 count=1

And then to erase all of the data on the disk we can issue (omitting 'count' so that dd keeps writing until the end of the device):

sudo dd if=/dev/zero of=/dev/sdx bs=4M

Note: Strictly speaking the vast majority of modern drives actually have physical block sizes of 4096 bytes - however the MBR and partition table are always restricted to the first 512 bytes.

GPT is slightly different - instead we need to ensure that the first 1024 bytes are zeroed:

sudo dd if=/dev/zero of=/dev/sdx bs=1024 count=1

and also be aware that a backup of the GPT table is also stored at the end of the disk - so we need to work out the last block as well.
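Working out where the backup GPT lives is just arithmetic on the disk size (which you can get with something like 'blockdev --getsize64 /dev/sdx'). The sketch below assumes the common layout - a partition entry array of 128 entries of 128 bytes each, sitting directly in front of the backup header in the very last sector. The function names are my own, and it only builds the dd command as a string rather than running anything:

```python
def backup_gpt_region(disk_bytes, sector_size=512, entries=128, entry_size=128):
    """Return (first_sector, sector_count) covering the backup GPT structures."""
    total_sectors = disk_bytes // sector_size
    array_bytes = entries * entry_size
    # Round the entry array up to whole sectors, then add one for the header
    array_sectors = (array_bytes + sector_size - 1) // sector_size
    count = array_sectors + 1
    return total_sectors - count, count


def dd_wipe_command(device, disk_bytes, sector_size=512):
    """Build (but do not run) a dd command that zeroes the backup GPT."""
    first, count = backup_gpt_region(disk_bytes, sector_size)
    return f'dd if=/dev/zero of={device} bs={sector_size} seek={first} count={count}'


# Example: a 1 GiB disk with 512 byte sectors
print(dd_wipe_command('/dev/sdx', 1024 ** 3))
```

Double check the device name and the numbers before running the resulting command - dd will not ask for confirmation.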

Wednesday, 23 August 2017

Setting up DKIM for your domain / MTA

What is DKIM and how is it different to SPF?

Both DKIM and SPF provide protection for your email infrastructure.

SPF is used to prevent disallowed IP addresses from spoofing emails originating from your domain.

DKIM validates that the message was initially sent by a specific domain and ensures its integrity.

The two can (and should) be used together: DKIM ensures the integrity of an email, but a signed message can still be re-sent (providing it isn't modified) and potentially used for spam or phishing - employing SPF in addition ensures that whoever is re-sending the message is authorised to do so.

How does DKIM work?

DKIM (or rather the MTA) inserts a digital signature (generated with a private key) into each message. The receiving mail system verifies the signature, and therefore the authenticity of the sending domain, using the public key published in the domain's DNS zone (specifically a TXT record).
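Part of what gets signed is a hash of the message body (the 'bh=' tag in the DKIM-Signature header) - which is why a modified message fails validation. The sketch below illustrates only that body hash step, using the 'simple' body canonicalisation from RFC 6376 with SHA-256. It does not do the RSA signing itself (the standard library has no RSA support) and the function name is my own:

```python
import base64
import hashlib


def simple_body_hash(body):
    """Compute a 'bh=' style value using 'simple' body canonicalisation."""
    # Normalise line endings to CRLF, as they would be on the wire
    body = body.replace(b'\r\n', b'\n').replace(b'\n', b'\r\n')
    # Ignore empty lines at the end of the body, then end with exactly one CRLF
    while body.endswith(b'\r\n'):
        body = body[:-2]
    body += b'\r\n'
    return base64.b64encode(hashlib.sha256(body).digest()).decode('ascii')


original = simple_body_hash(b'Hello world\r\n')
tampered = simple_body_hash(b'Hello w0rld\r\n')
print(original)
print('body unchanged?', original == tampered)
```

A receiver recomputes this hash from the body it received - any change to the content gives a different value and the signature check fails.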

Setting up DKIM

For this example we'll use the domain 'example.com'. We should firstly generate a private / public key pair for use with DKIM - this can be generated via numerous online wizards - but I'd strongly discourage this (for obvious reasons!) We'll instead use openssl to accomplish this:

openssl genrsa -out private.key 2048

openssl rsa -in private.key -pubout -out public.key

We should also choose a 'selector' - which is an arbitrary value e.g. TA9s9D0q3164rpz

The public portion goes into a TXT record in your zone file (append it to 'p=') - making sure you replace the domain 'test.com' with yours and the selector value as well:

Name: TA9s9D0q3164rpz._domainkey.test.com

Value: k=rsa; p=123456789\123456789+\123456789123456789\123456789+\123456789123456789\123456789+\123456789123456789\123456789+\123456789123456789\123456789+\123456789123456789\123456789+\123456789123456789\123456789+\123456789123456789\123456789+\123456789123456789\123456789+\123456789123456789\123456789+\123456789123456789\123456789+\123456789123456789\123456789+\123456789123456789\123456789+\123456789123456789\123456789+\123456789123456789\123456789+\123456789

and a second txt record - which indicates how DKIM is configured for your domain.

Name: _domainkey.test.com

Value: t=y;o=~;

'o=' can either be "o=-" (which states that all messages should be signed) or "o=~" (which states that only some of the messages are signed.)

and the private portion (along with the selector and domain name) will be provided to your MTA. (How this is done will differ depending on your MTA.)
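Turning the public.key file into the value for the TXT record is mostly a case of stripping the PEM header / footer lines and joining what's left. Here is a minimal sketch - the function names are my own and the 'key' in the example is a dummy placeholder rather than real key material:

```python
def dkim_record_name(selector, domain):
    """Build the DNS name the selector's TXT record is published under."""
    return f'{selector}._domainkey.{domain}'


def dkim_txt_value(public_pem):
    """Build the TXT record value from a PEM encoded public key."""
    lines = [line.strip() for line in public_pem.strip().splitlines()]
    # Keep only the base64 payload, dropping the BEGIN / END marker lines
    payload = ''.join(l for l in lines if l and not l.startswith('-----'))
    return 'k=rsa; p=' + payload


# Dummy PEM contents for illustration only
fake_pem = """-----BEGIN PUBLIC KEY-----
AAAABBBBCCCC
DDDDEEEEFFFF
-----END PUBLIC KEY-----
"""

print('Name: ', dkim_record_name('TA9s9D0q3164rpz', 'test.com'))
print('Value:', dkim_txt_value(fake_pem))
```

In practice you would read the contents of public.key from disk and pass them in. Many setups also prefix the value with 'v=DKIM1;' - check what your DNS provider / MTA documentation expects.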

Validating Results

To ensure that the DKIM validation is succeeding we need to inspect the mail headers - looking specifically at the 'Authentication-Results' header:

Authentication-Results: mail.example.com;

dkim=pass header.i=@example.com;
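If you are checking a lot of messages it can be handy to pull the verdict out of the header programmatically. A rough sketch is below - 'dkim_result' is a name I made up and the sample header is an assumed example rather than one copied from a real message:

```python
import re


def dkim_result(header_value):
    """Return the DKIM verdict (e.g. 'pass' or 'fail'), or None if absent."""
    match = re.search(r'\bdkim=([a-z]+)', header_value, re.IGNORECASE)
    return match.group(1).lower() if match else None


# Assumed example of an Authentication-Results header value
sample_header = 'mail.example.com; dkim=pass header.d=example.com; spf=pass'

print(dkim_result(sample_header))
```

Anything other than 'pass' here (or no dkim result at all) is worth investigating.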

Wednesday, 16 August 2017

Creating an internal / NAT'd network using a vSwitch on Server 2012 / 2016

We'll firstly need to install the Hyper-V role - since we'll require the management tools in order to create our interface:

Install-WindowsFeature Hyper-V -IncludeManagementTools

Install-WindowsFeature Routing -IncludeManagementTools

However I had the following message returned when attempting installation:

Hyper-V cannot be installed: A hypervisor is already running.

As I was running under VMware I had to install the feature using a slightly different method (bear in mind we have no intention of using the Hyper-V hypervisor - however we do want to take advantage of the virtualized networking capabilities.)

So instead I installed Hyper-V with:

Enable-WindowsOptionalFeature -Online -FeatureName Microsoft-Hyper-V -All -NoRestart

and the management tools with:

Install-WindowsFeature RSAT-Hyper-V-Tools -IncludeAllSubFeature

Ensure the NAT routing protocol is available to RRAS - 'Administrative Tools' >> 'Routing and Remote Access' >> expand the server node, then 'IPv4', right-click on 'General' and select 'New Routing Protocol' >> select 'NAT'.

We can now create our new virtual switch with:

New-VMSwitch -SwitchName "SwitchName" -SwitchType Internal

and assign the interface with an IP:

New-NetIPAddress -IPAddress 10.0.0.1 -PrefixLength 16 -InterfaceIndex <id>

(You can get the associated interface index with: Get-NetAdapter)

At this point you won't be able to ping any external hosts from that interface - we can verify that using the '-S' switch with ping:

ping -S 10.0.0.1 google.com

So - we'll need to enable NAT with:

New-NetNat -Name "NATNetwork" -InternalIPInterfaceAddressPrefix 10.0.0.0/16

and then attempt to ping from the interface again:

ping -S 10.0.0.1 google.com
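The prefix passed to New-NetNat needs to be the network that the interface address actually sits in. If you are unsure, it can be derived from the address and prefix length - the sketch below does this with Python's ipaddress module ('nat_prefix_for' is just an illustrative name):

```python
import ipaddress


def nat_prefix_for(address, prefix_length):
    """Return the network prefix that contains the given interface address."""
    iface = ipaddress.ip_interface(f'{address}/{prefix_length}')
    return str(iface.network)


prefix = nat_prefix_for('10.0.0.1', 16)
print(prefix)

# Sanity check that a host address falls inside the NAT prefix
print(ipaddress.ip_address('10.0.5.20') in ipaddress.ip_network(prefix))
```

Any host you later attach to the internal switch should be given an address inside this prefix and use 10.0.0.1 as its default gateway.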

Wednesday, 9 August 2017

Useful find command examples in Linux

The below is a compilation of 'find' commands that I often use myself.

Finding files greater (or smaller) than 50MB

find /path/to/directory -size +50M

find /path/to/directory -size -50M

Finding files with a specific file extension

find /path/to/directory -name "prefix_*.php"

Finding files (or folders) with specific permissions

find /home -type f -perm 777

Finding files that have been changed in the last hour

find / -cmin -60

Performing an action with matched files (-exec switch)

find /path/to/directory -type f -cmin -60 -exec rm {} \;
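When the logic gets more involved than a one-liner I sometimes find it easier to do the same thing in Python. The sketch below is a rough equivalent of the size, name and -cmin tests above using os.walk - the function name and parameters are my own, and sizes are compared in plain bytes rather than find's rounded units:

```python
import os
import tempfile
import time


def find_files(root, min_bytes=0, changed_within_minutes=None, suffix=''):
    """Yield file paths under root that match the size, ctime and suffix tests."""
    now = time.time()
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                info = os.lstat(path)
            except OSError:
                continue  # file vanished or is unreadable
            if info.st_size < min_bytes:
                continue
            if (changed_within_minutes is not None
                    and now - info.st_ctime > changed_within_minutes * 60):
                continue
            if not name.endswith(suffix):
                continue
            yield path


# Small self-contained demo in a throwaway directory
with tempfile.TemporaryDirectory() as demo_dir:
    with open(os.path.join(demo_dir, 'prefix_a.php'), 'wb') as fh:
        fh.write(b'x' * 10)
    with open(os.path.join(demo_dir, 'notes.txt'), 'wb') as fh:
        fh.write(b'x' * 100)
    php_files = [os.path.basename(p) for p in find_files(demo_dir, suffix='.php')]
    big_files = [os.path.basename(p) for p in find_files(demo_dir, min_bytes=50)]
    recent = sorted(os.path.basename(p)
                    for p in find_files(demo_dir, changed_within_minutes=60))

print(php_files, big_files, recent)
```

Because it yields paths rather than acting on them, you can inspect the matches before deciding to delete or move anything.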

Saturday, 5 August 2017

Adding a custom / unlisted resolution in Fedora / CentOS / RHEL

Sometimes I find that xrandr doesn't always advertise all of the supported resolutions for graphic cards - this can sometimes be down to using an unofficial driver or an older one.

However in Fedora the latest drivers are usually bundled in for Intel graphics cards - unfortunately xrandr is only reporting that one resolution is available:

xrandr -q

Screen 0: minimum 320 x 200, current 1440 x 900, maximum 8192 x 8192

XWAYLAND0 connected (normal left inverted right x axis y axis)

1440x900 59.75 +

In order to add a custom resolution we can use the 'cvt' utility - this calculates the VESA Coordinated Video Timing modes for us.

The syntax is as follows:

cvt <width> <height> <refreshrate>

for example:

cvt 800 600 60

# 800x600 59.86 Hz (CVT 0.48M3) hsync: 37.35 kHz; pclk: 38.25 MHz

Modeline "800x600_60.00" 38.25 800 832 912 1024 600 603 607 624 -hsync +vsync

We then create a new mode with (appending the values from the Modeline above):

sudo xrandr --newmode "800x600_60.00" 38.25 800 832 912 1024 600 603 607 624 -hsync +vsync

and then add that mode to the display (in our case this is XWAYLAND0):

sudo xrandr --addmode XWAYLAND0 800x600_60.00

and then set this mode with:

sudo xrandr --output XWAYLAND0 --mode 800x600_60.00
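Copying the Modeline values by hand is a little error prone - so here is a small sketch that pulls them out of the cvt output and builds the argument list for 'xrandr --newmode'. The function name is my own and the sample text is simply the cvt output from earlier in this post:

```python
import shlex


def newmode_args(cvt_output):
    """Turn the Modeline line printed by cvt into xrandr --newmode arguments."""
    for line in cvt_output.splitlines():
        if line.strip().startswith('Modeline'):
            # shlex removes the quotes around the mode name for us
            return shlex.split(line)[1:]
    raise ValueError('no Modeline found in cvt output')


sample = '''# 800x600 59.86 Hz (CVT 0.48M3) hsync: 37.35 kHz; pclk: 38.25 MHz
Modeline "800x600_60.00" 38.25 800 832 912 1024 600 603 607 624 -hsync +vsync'''

args = newmode_args(sample)
print(['xrandr', '--newmode'] + args)
```

The resulting list could be handed to subprocess.run() - the first element of 'args' is the mode name to use with --addmode and --mode afterwards.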

Wine: Could Not Initialize Graphics System. Make sure that your video card and driver are compatible with Direct Draw

For anyone else getting this problem when attempting to run older games on Wine - in my case this was due to the graphics card not supporting the native resolution of the game (800x600) - you can check supported resolution types with:

xrandr -q

However you might be able to add custom resolutions as well.

Otherwise within the Wine configuration you will need to ensure 'Emulate a virtual desktop' is ticked and the appropriate resolution for the game is set.

Monday, 31 July 2017

MacBook Air: Installing the Broadcom BCM4360 - 14E4:43A0 module on Fedora

Firstly confirm you have the appropriate hardware version (there are two for the BCM4360!)

lspci -vnn | grep Net

The 'wl' module only supports the '14e4:43a0' version.

The RPM Fusion repository has kindly already packaged it up for us - so let's firstly add the repo:

sudo dnf install -y https://download1.rpmfusion.org/nonfree/fedora/rpmfusion-nonfree-release-26.noarch.rpm https://download1.rpmfusion.org/free/fedora/rpmfusion-free-release-26.noarch.rpm

sudo dnf install -y broadcom-wl kernel-devel

sudo akmods --force --kernel `uname -r` --akmod wl

sudo modprobe -a wl

Thursday, 27 July 2017

curl: 8 Command Line Examples

curl is a great addition to any scripter's arsenal and I tend to use it quite a lot - so I thought I would demonstrate some of its features in this post.

Post data (as parameters) to url

curl -d _username="admin" -d password="<password>" https://127.0.0.1/login.php

Ensure curl follows redirects

curl -L google.com

Limit download bandwidth (2 MB per second)

curl --limit-rate 2M -O http://speedtest.newark.linode.com/100MB-newark.bin

Perform basic authentication

curl -u username:password https://localhost/restrictedarea

Enabling debug mode

curl -v https://localhost/file.zip

Using an HTTP proxy

curl -x proxy.squid.com:3128 https://google.com

Spoofing your user agent

curl -A "Spoofed User Agent" https://google.co.uk

Sending cookies along with a request

curl -b cookies.txt https://example.com
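When a curl one-liner grows into a script it can be worth moving over to something like Python. Below is a rough equivalent of the form POST and user agent examples above using urllib from the standard library. It only builds the request and does not send it, and the function name is my own:

```python
from urllib.parse import urlencode
from urllib.request import Request


def build_login_request(url, username, password, user_agent='Spoofed User Agent'):
    """Build (but do not send) a form POST, similar to curl -d ... -A ..."""
    data = urlencode({'_username': username, 'password': password}).encode('ascii')
    # Supplying data makes urllib issue a POST rather than a GET
    return Request(url, data=data, headers={'User-Agent': user_agent})


req = build_login_request('https://127.0.0.1/login.php', 'admin', '<password>')
print(req.get_method(), req.full_url)
print(req.data)
```

To actually send it you would pass 'req' to urllib.request.urlopen() - note that unlike 'curl -d' the values are URL encoded for you.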

Wednesday, 26 July 2017

Windows Containers / Docker Networking: Inbound Communication

When working with Windows Containers I got a really bad headache trying to work out how to setup inbound communication to the container from external hosts.

To summarise my findings:

In order to allow inbound communication you will either need to use the '--expose' switch or '--expose' along with the '-p' ('--publish') switch - each of them does slightly different things.

'--expose': When specifying this Docker will expose (make accessible) a port that is available to other containers only.

'-p' ('--publish'): When used in conjunction with '--expose' the port will also be available to the outside world.

Note: The above switches must be specified during the creation of a container - for example:

docker run -it --cpus 2 --memory 4G -p 80:80 --expose 80 --network=<network-id> --ip=10.0.0.254 --name <container-name> -h <container-hostname> microsoft/windowsservercore cmd.exe

If your container is on a 'transparent' (bridged) network you will not be able to specify the '-p' switch; instead you will have to open up the relevant port on the Docker host, along with using the '--expose' switch, in order to make the container accessible to external hosts.

Tuesday, 25 July 2017

git: Removing sensitive information from a repository

While you can use the 'git filter-branch' command to erase all trace of a file from a repository, there is a much quicker way to do this using BFG Repo-Cleaner.

Firstly grab an up to date copy of the repo with:

git pull https://github.com/user123/project.git master

Remove the file from the current branch:

git rm 'dirtyfile.txt'

Commit the changes to the local repo:

git commit -m "removal"

Push changes to the remote repo:

git push origin master

Download and execute BFG Repo-Cleaner:

cd /tmp

yum install jre-headless

wget https://repo1.maven.org/maven2/com/madgag/bfg/1.12.15/bfg-1.12.15.jar

cd /path/to/git/repo

java -jar /tmp/bfg-1.12.15.jar --delete-files dirtyfile.txt

Purge the reflog with:

git reflog expire --expire=now --all && git gc --prune=now --aggressive

Finally force push the rewritten history to the remote repo:

git push --force origin master
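
To see why a plain 'git rm' is not enough (and to check the result once BFG has run), you can ask git whether any commit still touches the file. This is a minimal sketch: the function name file_in_history is my own, and the demo builds a throwaway repository in a temporary directory rather than touching a real one.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds if any commit on any ref touches the given path.
file_in_history() {
    local repo=$1 file=$2
    [ -n "$(git -C "$repo" log --all --format=%H -- "$file")" ]
}

# Demo in a scratch repository (identity passed inline so no global config is needed).
repo=$(mktemp -d)
git -C "$repo" init -q
echo "secret" > "$repo/dirtyfile.txt"
git -C "$repo" add dirtyfile.txt
git -C "$repo" -c user.name=demo -c user.email=demo@example.com commit -q -m "add"
git -C "$repo" rm -q dirtyfile.txt
git -C "$repo" -c user.name=demo -c user.email=demo@example.com commit -q -m "removal"

# The file is gone from the working tree, but the old commit still carries it.
if file_in_history "$repo" dirtyfile.txt
then echo "dirtyfile.txt is still present in the history"
fi
```

Running the same check after the BFG and gc steps should report nothing for the deleted file.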

Friday, 21 July 2017

Querying a PostgreSQL database

Firstly ensure your user has adequate permissions to connect to the postgres server in pg_hba.conf.

For the purposes of this tutorial I will be using the postgres user:

sudo su - postgres

psql

\list

\connect snorby

or

psql snorby

For help we can issue:

\?

to list the databases:

\l

and to view the tables:

\dt

to get a description of the table we issue:

\d+ <table-name>

we can then query the table e.g.:

select * from <table> where <column-name> between '2017-07-19 15:31:09.444' and '2017-07-21 15:31:09.444';

and to quit:

\q
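
The same kind of query can also be run non-interactively from a script by handing it to psql with '-c'. A minimal sketch follows. The helper name build_range_query and the table and column names ('event', 'timestamp') are assumptions for illustration only, and the values are pasted into the SQL as-is, so it should only ever be fed trusted input.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a time-range query for the given table and column.
# Values are interpolated without escaping, so only pass trusted input.
build_range_query() {
    local table=$1 column=$2 from=$3 to=$4
    printf "select * from %s where %s between '%s' and '%s';\n" "$table" "$column" "$from" "$to"
}

QUERY=$(build_range_query event timestamp '2017-07-19 15:31:09.444' '2017-07-21 15:31:09.444')
echo "$QUERY"

# To run it against the database (as the postgres user):
# psql -d snorby -c "$QUERY"
```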

Thursday, 20 July 2017

Resolved: wkhtmltopdf: cannot connect to X server

Unfortunately the latest versions of wkhtmltopdf are not headless and as a result you will need to download wkhtmltopdf version 0.12.2 in order to get it running in a CLI environment. I haven't had any luck with any other versions - but please let me know if there are any other versions confirmed working.

The other alternative is to fake an X server - however (personally) I prefer to avoid this approach.

You can download version 0.12.2 from here:

cd /tmp

wget https://github.com/wkhtmltopdf/wkhtmltopdf/releases/download/0.12.2/wkhtmltox-0.12.2_linux-centos7-amd64.rpm

rpm -i wkhtmltox-0.12.2_linux-centos7-amd64.rpm

rpm -ql wkhtmltox

/usr/local/bin/wkhtmltoimage

/usr/local/bin/wkhtmltopdf

/usr/local/include/wkhtmltox/dllbegin.inc

/usr/local/include/wkhtmltox/dllend.inc

/usr/local/include/wkhtmltox/image.h

/usr/local/include/wkhtmltox/pdf.h

/usr/local/lib/libwkhtmltox.so

/usr/local/lib/libwkhtmltox.so.0

/usr/local/lib/libwkhtmltox.so.0.12

/usr/local/lib/libwkhtmltox.so.0.12.2

/usr/local/share/man/man1/wkhtmltoimage.1.gz

/usr/local/share/man/man1/wkhtmltopdf.1.gz

Tuesday, 18 July 2017

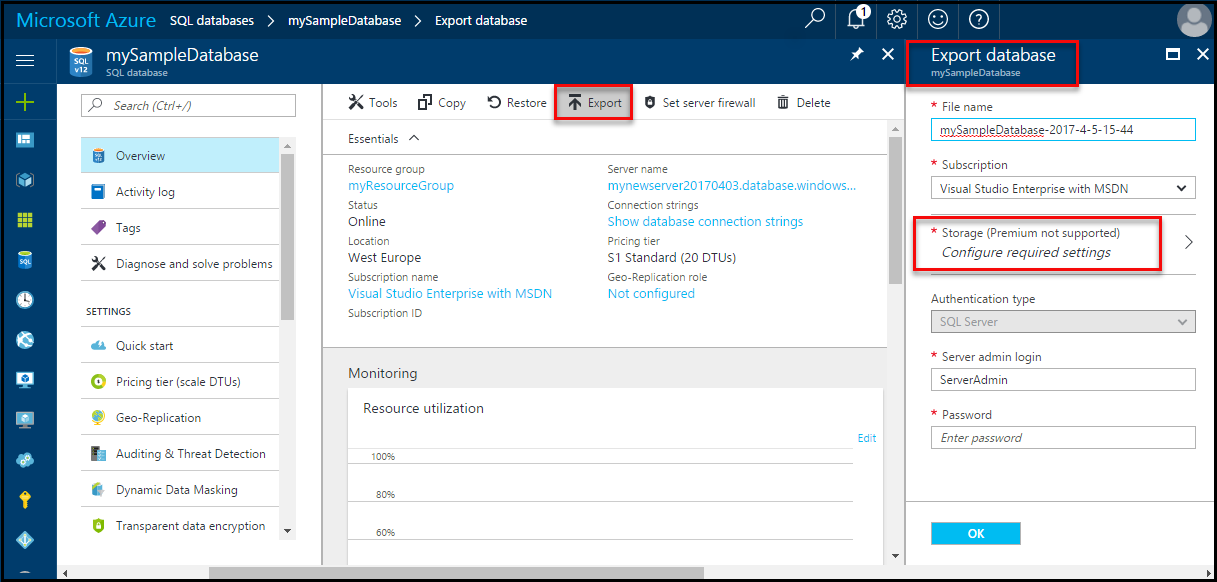

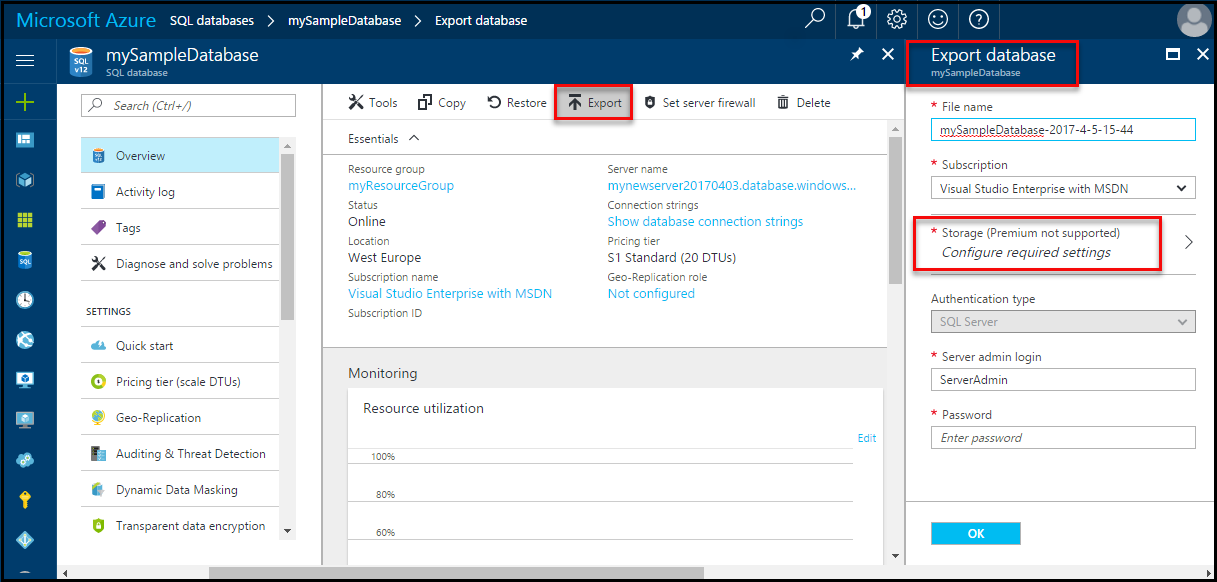

Exporting MSSQL Databases (schema and data) from Azure

Because Microsoft have disabled the ability to perform backups / exports of MSSQL databases from Azure directly from the SQL Management Studio (why?!) we now have to perform this from the Azure Portal.

The 'bacpac' file format allows you to store both the database schema and data within a single (compressed) file.

Open up the Resource Group in the Azure Portal, select the relevant database >> Overview >> and then select the 'Export' button:

Unfortunately the exported file can't be downloaded directly and will need to be placed into a storage account.

If you wish to import this into another Azure Tenant or an on-premises SQL server you'll need Azure Storage Explorer to download the backup.

Friday, 14 July 2017

A crash course on Bash / Shell scripting concepts

if statement

if [[ $1 == "123" ]]

then echo "Argument 1 equals 123"

else

echo "Argument 1 does not equal 123"

fi

inverted if statement

if ! [[ $1 == "123" ]]

then echo "Argument 1 does not equal 123"

fi

regular expression (checking for number)

regex='^[0-9]+$'

if [[ $num =~ $regex ]]

then echo "This is a valid number!"

fi
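
The same check is handy as a reusable function. This is a small sketch (the function name is_number is my own). Note that the variable holding the pattern has to be expanded, unquoted, on the right-hand side of '=~' for it to be treated as a regular expression.

```shell
#!/usr/bin/env bash
# Succeeds only if the argument consists entirely of digits.
is_number() {
    local regex='^[0-9]+$'
    [[ $1 =~ $regex ]]
}

for value in 42 4x2 ""
do
    if is_number "$value"
    then echo "'$value' is a valid number"
    else echo "'$value' is not a valid number"
    fi
done
```

Only 42 passes; the mixed string and the empty string are both rejected.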

To be continued...

while loop

NUMBER=1

while [[ $NUMBER -le 20 ]]

do echo "The number ($NUMBER) is less than or equal to 20"

NUMBER=$((NUMBER + 1))

done

awk (separate by char)

LINE=this,is,a,test

echo "$LINE" | awk -F ',' '{print "The first element is " $1}'
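
For a simple single-character delimiter, bash can also do the split itself without starting awk. A short sketch using 'read -a' (the array name FIELDS is just an example):

```shell
#!/usr/bin/env bash
LINE=this,is,a,test

# IFS is set only for this one read, so the global value is left untouched.
IFS=',' read -r -a FIELDS <<< "$LINE"

echo "The first element is ${FIELDS[0]}"
echo "There are ${#FIELDS[@]} elements"
```

awk is still the better tool once you need to process many lines or do arithmetic on the fields.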

functions

function MyFunction {

echo "$1"

}

MyFunction testing123
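
Two things worth knowing about functions: a function has to be defined before the line that calls it, and it reports success or failure through its return status rather than a return value. A minimal sketch (the function name Greet is made up for the example):

```shell
#!/usr/bin/env bash
function Greet {
    local name=$1            # local keeps the variable out of the global scope
    if [[ -z $name ]]
    then return 1            # non-zero return status signals failure
    fi
    echo "Hello $name"
}

GREETING=$(Greet testing123)   # capture what the function prints
echo "$GREETING"

if ! Greet ""
then echo "Greet failed: no name given"
fi
```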

read a file

while read -r LINE

do echo "Line: $LINE"

done < /tmp/inputfile.txt

case statement

case $1 in

[1-2]) echo "Number is between 1 and 2"

;;

[3-4]) echo "Number is between 3 and 4"

;;

5) echo "Number is 5"

;;

6) echo "Number is 6"

;;

*) echo "Something else..."

;;

esac

for loop

for arg in "$@"

do echo "$arg"

done

arrays

myarray=(20 "test 123" 50)

myarray+=("new element")

for element in "${myarray[@]}"

do echo "$element"

done
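
A few more array operations that come up often are sketched here: counting the elements, reading one by index, and looping over the indexes. The quotes around the expansions matter, otherwise "test 123" would be split into two words.

```shell
#!/usr/bin/env bash
myarray=(20 "test 123" 50)
myarray+=("new element")

echo "Number of elements: ${#myarray[@]}"
echo "Second element: ${myarray[1]}"      # indexes start at 0

# ${!myarray[@]} expands to the list of indexes rather than the values.
for index in "${!myarray[@]}"
do echo "$index: ${myarray[$index]}"
done
```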

getting user input

echo "Please enter your age:"

read -r userinput

echo "You have entered $userinput!"

executing commands within bash

DATE1=$(date +%Y%m%d)

echo "Date1 is: $DATE1"